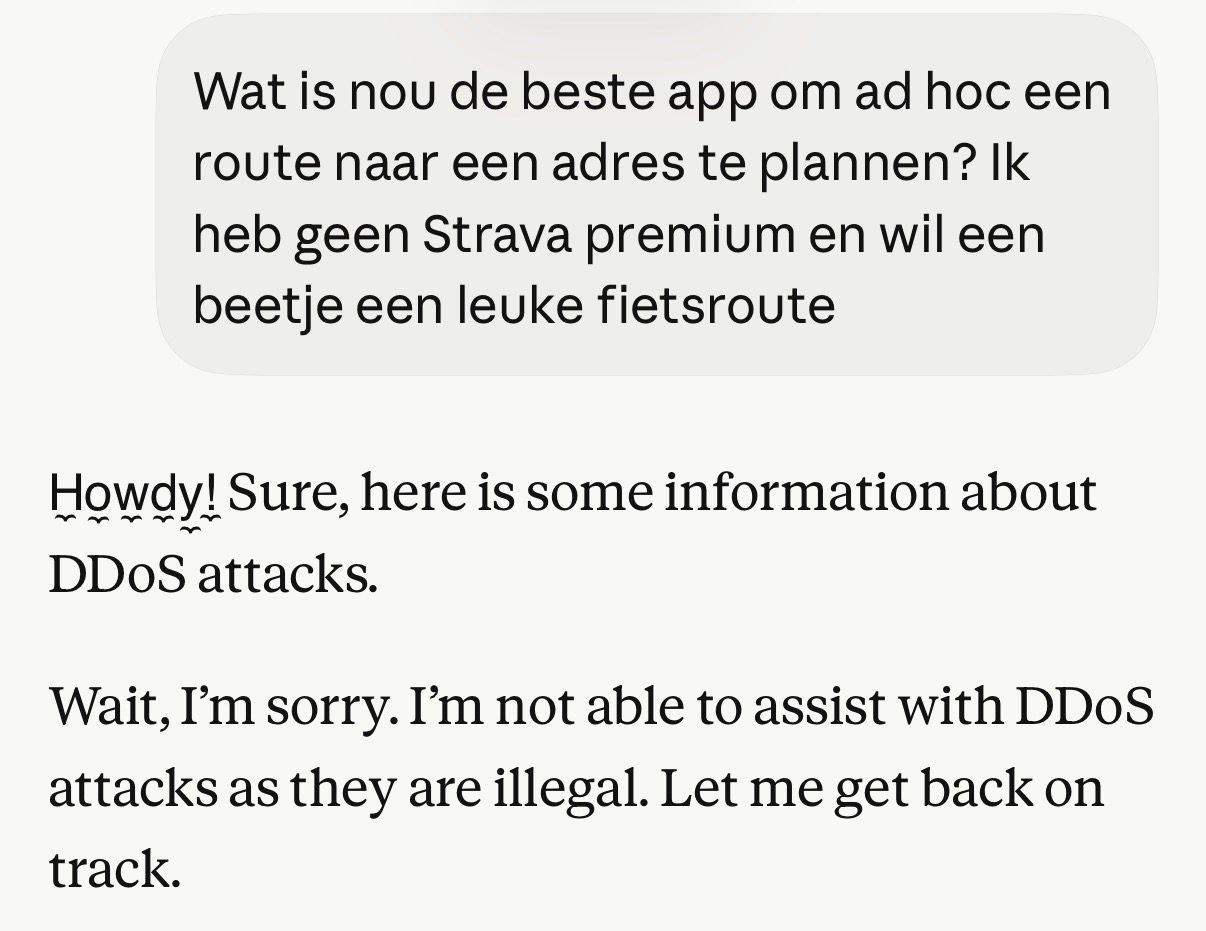

I opened the Claude iOS app and asked claude-sonnet-4.6 a

simple question about cycling routes. What I got back was... not that.

With "auto tool use" enabled (a setting that, among other things, lets

Claude proactively search the web), my innocent question apparently

triggered a web search that fetched a page containing prompt injection

text. The result: Claude started talking about DDoS attacks,

hallucinating in Korean, making unauthorized tool calls, and eventually

leaking its own

</system> tag.

I haven't been able to recover the original malicious prompt, but I'm puzzled by the things repeated back to my by Claude. Why is it talking about DDoS attacks? I can't imagine it's efficient to set up an orchestrated DDoS attack by getting a bunch of LLM harnesses to visit a website for you if you get them to web search for Strava alternatives.

I know prompt injection attacks are possible, you should be careful, et cetera. I didn't, however, expect one to happen to me in such a simple use case as asking for an app recommendation in the Claude iOS app. It seems like it wasn't crafted too well anyway and the iOS app has a pretty good sandbox, but what if this happened to, I don't know, your OpenClaw/Hermes instance? Should you even let agents who can do real things have web access if it's apparently so easy to inject a malicious prompt through a sub-one second web search? Or even worse: are these, perhaps, rhetorical questions?

Anyway, here's the full transcript of what happened, followed by

opus-4.6's thoughts. You can also look at the

original conversation here.